Introduction

This is the second of the projects I already had when I posted ‘Updates to project’.

Here is its repository: Machine Learning project on GitHub[1].

I started it as the artificial intelligence hype was getting stronger, just to have a project in a domain that attracts a lot of interest nowadays.

At that point I was thinking of continuing it with convolutional networks and at least recurrent networks, not necessarily going as far as LLMs or some fancy diffusion model, but I stopped there (for now?) because it’s already complex enough and covers quite a bit of the fundamentals.

By the way, since it’s about machine learning, I generated the header image with Stable Diffusion.

Some details

I’m not going to describe all the details of the project here. I think there are enough already in the README, so please visit the project on GitHub[1].

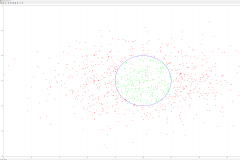

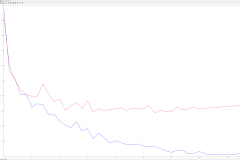

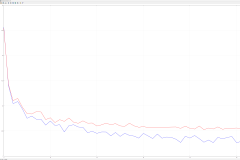

Without much ado, here are some charts I generated with Gnuplot from data obtained with the project:

They are described in more detail in the README.

Very briefly: I started in a typical ‘tutorial’ manner with simple linear regression, showing how polynomial (and other kinds of) regression can be built out of it, then went through logistic regression and generalized linear models, and on to simple neural networks (multilayer perceptrons).
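To make the ‘polynomial regression out of linear regression’ step concrete, here is a minimal Python sketch. It is not the project’s Eigen-based code. The function names are hypothetical, and solving the normal equations directly is an assumption made for brevity (the project may well fit the model differently, for example with gradient descent). The only point is that the model stays linear in the weights once each input `x` is expanded into the features `[1, x, x^2, ...]`.

```python
def polynomial_features(x, degree):
    """Map a scalar x to the feature vector [1, x, x^2, ..., x^degree]."""
    return [x ** k for k in range(degree + 1)]


def solve_linear_system(a, b):
    """Solve a * w = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    # Augmented matrix, so row operations update both sides at once.
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    # Back substitution on the upper-triangular system.
    w = [0.0] * n
    for r in range(n - 1, -1, -1):
        s = sum(m[r][c] * w[c] for c in range(r + 1, n))
        w[r] = (m[r][n] - s) / m[r][r]
    return w


def fit_polynomial(xs, ys, degree):
    """Ordinary least squares on the expanded features: (X^T X) w = X^T y."""
    X = [polynomial_features(x, degree) for x in xs]
    d = degree + 1
    xtx = [[sum(row[i] * row[j] for row in X) for j in range(d)] for i in range(d)]
    xty = [sum(row[i] * y for row, y in zip(X, ys)) for i in range(d)]
    return solve_linear_system(xtx, xty)


# Noise-free samples of y = 1 - 2x + 0.5x^2; the fit should recover the coefficients.
xs = [-2.0, -1.0, 0.0, 1.0, 2.0, 3.0]
ys = [1.0 - 2.0 * x + 0.5 * x * x for x in xs]
weights = fit_polynomial(xs, ys, degree=2)
```

Swapping `polynomial_features` for any other fixed feature map (sines, radial basis functions and so on) gives the ‘other’ regressions without touching the solver.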

For data sets I used the typical ones found in tutorials and books: the Iris dataset and the EMNIST digits data. When moving on to neural networks, I first solved the very well known XOR problem. Although the neural network itself is very simple, I implemented many stochastic gradient descent algorithms, including AdamW[2]. The neural network also has dropout[3] and batch normalization[4]. There are various loss functions, activation functions and weight initializations available, so one can experiment with them to find the best combination.
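For readers who have not met AdamW, here is a minimal single-parameter sketch in Python of the update from the AdamW paper (with the schedule multiplier fixed to 1). It is an illustration, not the project’s implementation. The function name `adamw_step` and the default hyperparameters are assumptions chosen for the example. What sets it apart from plain Adam with L2 regularization is that the weight decay term is applied directly to the weight, outside the adaptive scaling.

```python
import math


def adamw_step(w, grad, m, v, t, lr=1e-3, beta1=0.9, beta2=0.999,
               eps=1e-8, weight_decay=1e-2):
    """One AdamW update for a single scalar weight; t is the 1-based step count."""
    # Exponential moving averages of the gradient and the squared gradient.
    m = beta1 * m + (1.0 - beta1) * grad
    v = beta2 * v + (1.0 - beta2) * grad * grad
    # Bias correction for the zero-initialized averages.
    m_hat = m / (1.0 - beta1 ** t)
    v_hat = v / (1.0 - beta2 ** t)
    # Decoupled weight decay: added next to the Adam step, not folded into grad.
    w = w - lr * (m_hat / (math.sqrt(v_hat) + eps) + weight_decay * w)
    return w, m, v


# Toy usage: minimize f(w) = (w - 3)^2 with weight decay switched off.
w, m, v = 0.0, 0.0, 0.0
for t in range(1, 501):
    grad = 2.0 * (w - 3.0)
    w, m, v = adamw_step(w, grad, m, v, t, lr=0.1, weight_decay=0.0)
```

With a zero gradient the adaptive term vanishes and the weight still shrinks by `lr * weight_decay * w`, which is exactly the decoupling.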

Using an ensemble of neural networks, I reached about 99.55% accuracy on the EMNIST digits test set, which is quite good.
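The post does not say how the ensemble combines its members, so the following Python sketch shows just one common option, under that assumption: average the per-class probabilities of all networks and pick the class with the highest average. The function name and the three-class toy numbers are made up for illustration (the digits task has ten classes).

```python
def ensemble_predict(member_probs):
    """Average per-class probabilities over the models and return (label, averages)."""
    n_models = len(member_probs)
    n_classes = len(member_probs[0])
    avg = [sum(p[c] for p in member_probs) / n_models for c in range(n_classes)]
    label = max(range(n_classes), key=lambda c: avg[c])
    return label, avg


# Hypothetical softmax outputs of three networks for one input.
member_probs = [
    [0.50, 0.40, 0.10],  # model A slightly prefers class 0
    [0.20, 0.70, 0.10],  # model B is confident about class 1
    [0.45, 0.35, 0.20],  # model C slightly prefers class 0
]
label, avg = ensemble_predict(member_probs)
```

Here two of the three models individually vote for class 0, yet the averaged probabilities favour class 1, because averaging takes each model’s confidence into account while a plain majority vote does not.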

Conclusion

This concludes the blog entry about this project. I’m too lazy to write more here, so please visit the project’s README on GitHub if you want to find out more about it.

It’s just another ‘from scratch’ neural network project (well, it uses Eigen[5] for the linear algebra), like many others.

- [1] Machine Learning: the Machine Learning repository on GitHub

- [2] AdamW paper: the AdamW paper on arXiv

- [3] Dropout: the dropout paper

- [4] Batch normalization: the batch normalization paper on arXiv

- [5] Eigen: the matrix library